Data Pipeline Engineering

Pipelines That Don't Break at Month-End.

- ISO 27001 Certified

- ISO 22301 Certified

- DORA Aligned

- GDPR Compliant

- Start in 7–10 days

- From €5–6k/mo

HST Solutions builds production-grade data pipelines using Apache Airflow, dbt, and Spark for organisations across Ireland, the UK, and Europe, with project management, architecture reviews, and DevOps included as standard. ISO 27001 certified.

Why Teams Bring Us In

- Pipelines break under load

- Nobody owns data quality

- Hiring takes 6+ months

- Single contractors get stuck

Who brings in a Managed Data Pipeline Engineer

Fund administrators with legacy SSIS estates

Operations teams drowning in manual reconciliation

CTOs with open senior data engineer roles for 6+ months

Companies scaling beyond what their current pipelines can handle

Regulated firms needing audit trails and data lineage

If that sounds familiar, we've solved it before.

What is Data Pipeline Engineering?

Data pipeline engineering is the discipline of designing, building, and maintaining automated systems that extract data from source systems, transform it into usable formats, and load it into target destinations like data warehouses, data lakes, or analytics platforms.
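As a rough illustration of the extract, transform, and load steps described above, here is a minimal sketch using only the Python standard library. The `customers` table, the field names, and the in-memory SQLite target are assumptions made for the example; a production pipeline would read from real source systems and write to a warehouse or lake.

```python
import sqlite3


def extract(source_rows):
    """Stand-in for reading from a source system (database, API, file)."""
    return list(source_rows)


def transform(rows):
    """Trim names, lower-case emails, and keep the first row seen per id."""
    seen_ids = set()
    cleaned = []
    for row in rows:
        if row["id"] in seen_ids:
            continue  # skip duplicate ids
        seen_ids.add(row["id"])
        cleaned.append(
            (row["id"], row["name"].strip(), row["email"].strip().lower())
        )
    return cleaned


def load(conn, rows):
    """Write the cleaned rows to the (hypothetical) customers table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(id INTEGER PRIMARY KEY, name TEXT, email TEXT)"
    )
    conn.executemany("INSERT OR REPLACE INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(conn, source_rows):
    """Run extract -> transform -> load and return the target row count."""
    load(conn, transform(extract(source_rows)))
    return conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
```

Calling `run_pipeline(sqlite3.connect(":memory:"), rows)` with a list of dictionaries runs all three stages in order. Using `INSERT OR REPLACE` keyed on `id` means a re-run does not create duplicate rows in the target.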

Modern data pipelines use tools like Apache Airflow for orchestration, dbt for transformation, and Apache Spark for large-scale processing. They handle both batch processing (scheduled loads) and real-time streaming (continuous data flow).

HST provides senior data pipeline engineers who build production-grade pipelines, not POCs that never ship. Our engineers come backed by PM, architecture, and DevOps support, so pipelines actually get deployed and maintained.

Technology Stack

We don't push a specific stack. We work with what you have and recommend based on your actual requirements.

WHAT YOU GET

Data Pipeline Pod

Senior Data Pipeline Engineer

- Python

- SQL

- Spark

- Airflow

- dbt

- batch and streaming

- AWS Glue

- Azure Data Factory

- Databricks

Project Manager

- Designs system architecture

- Comms

- Weekly status

- Risk tracking

- Stakeholder management

Architecture Reviews

- 2h/week design reviews (included)

- Schema design

- Performance optimisation

DevOps assist

- CI/CD for data pipelines (included)

- Automated testing

- Monitoring and alerting

SLA & Compliance

- Weekly demos

- 48-hour remediation on issues we touch

- ISO 27001 & 22301

- DORA aligned

- GDPR

- Full IP assignment

One monthly price. One embedded seat. A full bench behind it.

What We Build

- ETL & ELT Pipelines

  - Source extraction (databases, APIs, files, SaaS platforms)

  - Data transformation (cleaning, deduplication, normalisation)

  - Load orchestration (incremental, full refresh, CDC)

  - Error handling, retry logic, alerting

- Pipeline Orchestration

  - Apache Airflow DAGs

  - Azure Data Factory pipelines

  - AWS Step Functions

  - Prefect, Dagster implementations

- Data Transformation

  - dbt models (staging, intermediate, marts)

  - Spark transformations (PySpark, Scala)

  - SQL-based transformations

  - Data quality checks (Great Expectations, dbt tests)

- Pipeline Modernisation

  - Legacy SSIS → modern ELT (Airflow, dbt, ADF)

  - Stored procedure hell → modular transformation layers

  - Manual Excel processes → automated pipelines

  - On-premise → cloud-native (AWS Glue, Azure Synapse)
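The error handling, retry logic, and alerting listed under ETL & ELT Pipelines can be sketched in a few lines. This is a generic illustration, not the retry mechanism of Airflow or any other orchestrator; `run_with_retry`, its parameters, and the `on_failure` alert hook are hypothetical names chosen for the example.

```python
import time


def run_with_retry(task, max_attempts=3, base_delay=1.0,
                   sleep=time.sleep, on_failure=None):
    """Run task(); on an exception, wait and try again.

    The wait doubles after each failed attempt (base_delay, 2x, 4x, ...).
    After the final failed attempt the optional on_failure hook is called
    with the exception (for example, to send an alert) and the exception
    is re-raised so the failure stays visible to the caller.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts:
                if on_failure is not None:
                    on_failure(exc)
                raise
            sleep(base_delay * 2 ** (attempt - 1))
```

The `sleep` argument is injectable so the backoff schedule can be tested without waiting. In practice the retried task should be idempotent, so that a retry after a partial load does not duplicate rows.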

Case Studies

Waystone — Fund Administration Pipelines

- The challenge

  - Waystone's data pipelines were built on legacy SQL Server and SSIS, designed years ago for a fraction of current data volumes.

  - Month-end processing was unreliable, reports didn't reconcile, and the team spent more time firefighting than building.

- What we built

  - Migrated from SSIS to modern ELT architecture (dbt + Airflow on AWS)

  - CDC pipelines using AWS DMS for real-time data replication

  - Data quality framework with automated checks and alerting

  - Audit trail and lineage documentation for regulatory compliance

- The outcome

  - Month-end processing time reduced by 70%

  - Zero pipeline failures during regulatory reporting windows

  - Full data lineage for Central Bank inspection readiness

  - Team now builds features instead of fixing legacy code

Companjon — Insurance Data Ingestion

- The challenge

  - Companjon needed to ingest data from multiple insurance partners in various formats, each with different schemas, delivery methods, and quality issues. Manual ingestion couldn't scale.

- What we built

  - Kafka-based ingestion layer mirroring 100% of API traffic

  - Automated schema detection and mapping

  - Data quality checks at ingestion time

  - Databricks bronze/silver/gold medallion architecture

- The outcome

  - Zero latency impact on production APIs

  - Automated ingestion for new partners (hours, not weeks)

  - Complete audit trail for compliance

  - Near real-time dashboards for business intelligence

Trusted by leading organisations

Why marketplaces can't deliver Kubernetes for enterprises

| | Big 4 / Consultancies | Solo Contractors | Offshore Teams | HST |
|---|---|---|---|---|
| Pipeline expertise | | | | |
| Support structure | | | | |
| Accountability | | | | |
| Speed to start | | | | |
| Pricing | | | | |

How We Work

Embed

Assess & Plan

Build

Optimise

Pricing

Precision Pod

€5–6k/month

Single seat

- 1 senior data engineer

- Embedded in your team

- PM, Architecture, DevOps included

Best for: Focused pipeline work, single domain

Pair Pod

€10–11k/month

Two engineers

- 2 engineers working together

- PM, Architecture, DevOps included

Best for: Large-scale pipeline modernisation, complex domains

Mini-Team

€15–16k/month

Three engineers

- 3 engineers with mixed specialities

- PM, Architecture, DevOps included

Best for: Full data platform builds, multiple workstreams

All plans include

Weekly demos, architecture reviews, SLAs, ISO 27001 certified, full IP assignment.

Proof that Reduces Risk

Frequently asked questions

What's the difference between ETL and ELT?

ETL (Extract, Transform, Load) transforms data before loading it into the target. ELT (Extract, Load, Transform) loads raw data first, then transforms it inside the data warehouse. ELT is generally preferred for modern cloud data platforms like Snowflake and Databricks because it’s more flexible and leverages cheap cloud compute. HST builds both; we recommend based on your specific requirements.
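To make the ELT half of that answer concrete, here is a minimal sketch in which raw rows are loaded untouched and the transformation then runs as SQL inside the target. An in-memory SQLite database stands in for the warehouse, and the `raw_orders` and `orders` tables are assumptions made for the example.

```python
import sqlite3


def load_raw(conn, rows):
    """Load step: land source rows exactly as received, all as text."""
    conn.execute(
        "CREATE TABLE raw_orders (order_id TEXT, amount TEXT, currency TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)
    conn.commit()


def transform_in_warehouse(conn):
    """Transform step: type, clean, and deduplicate using SQL in the target."""
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT DISTINCT
            CAST(order_id AS INTEGER) AS order_id,
            CAST(amount AS REAL)      AS amount,
            UPPER(TRIM(currency))     AS currency
        FROM raw_orders
        WHERE amount IS NOT NULL AND TRIM(amount) <> ''
        """
    )
    conn.commit()
```

Calling `load_raw` and then `transform_in_warehouse` on the same connection leaves both tables in place. Because the raw table is kept, the transformation can be changed and re-run later without going back to the source. In an ETL design the same cleaning would happen in application code before anything is written to the target.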

How long does it take to build a data pipeline?

Simple pipelines (single source, basic transformation) can be production-ready in 1–2 weeks. Complex pipelines (multiple sources, business logic, data quality checks) typically take 4–8 weeks. Full pipeline modernisation projects (replacing legacy SSIS, migrating to cloud) take 3–6 months depending on scope.

Do you work with our existing tools?

Yes. We mirror your stack: if you’re on Azure with Data Factory, we work in Azure with Data Factory. We don’t force technology changes that add risk. We modernise incrementally, proving value before expanding scope.

What about data quality?

Data quality is built into every pipeline we build. We implement automated checks using tools like Great Expectations or dbt tests, set up alerting for failures, and create runbooks for common issues. Poor data quality is usually a pipeline design problem, not a tooling problem.
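The kind of automated check described above can be illustrated in plain Python. This sketch does not use the Great Expectations or dbt APIs; the function names and the result dictionary shape are assumptions made for the example, loosely mirroring the not-null and unique tests those tools provide.

```python
def check_not_null(rows, column):
    """Fail if any row has a missing or empty value in the column."""
    failing = [i for i, row in enumerate(rows) if row.get(column) in (None, "")]
    return {"check": f"not_null({column})", "passed": not failing,
            "failing_rows": failing}


def check_unique(rows, column):
    """Fail if a value in the column repeats; report the repeated rows."""
    seen, failing = set(), []
    for i, row in enumerate(rows):
        value = row.get(column)
        if value in seen:
            failing.append(i)
        seen.add(value)
    return {"check": f"unique({column})", "passed": not failing,
            "failing_rows": failing}


def run_checks(rows, checks):
    """Run (check_function, column) pairs; return all results and failures."""
    results = [check(rows, column) for check, column in checks]
    failed = [result for result in results if not result["passed"]]
    return results, failed
```

A pipeline step would call `run_checks` after each load and raise an alert, or stop downstream tasks, whenever the `failed` list is not empty.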

Can you help with existing pipelines that keep breaking?

Absolutely. About 40% of our engagements start with stabilising existing pipelines before building new ones. We audit what you have, identify failure points, and fix them while documenting everything so your team can maintain it.

How fast can you start?

7–10 business days from signed agreement to engineer embedded in your team.

Give us 20 minutes. We'll show you what reliable pipelines look like.

Find The Perfect Solutions For Your Project

Managed Team

Your product, our dedicated team. From concept to completion, we handle it all.

Staff Augmentation

Need extra hands? Our experts seamlessly join your team, providing the skills you need, when you need them.

Fixed Cost

One Team, One Dream

Build Trust with Every Interaction

Improve Everything

Own It

Obsessed: Over Results

Proven Excellence

Partners in Precision

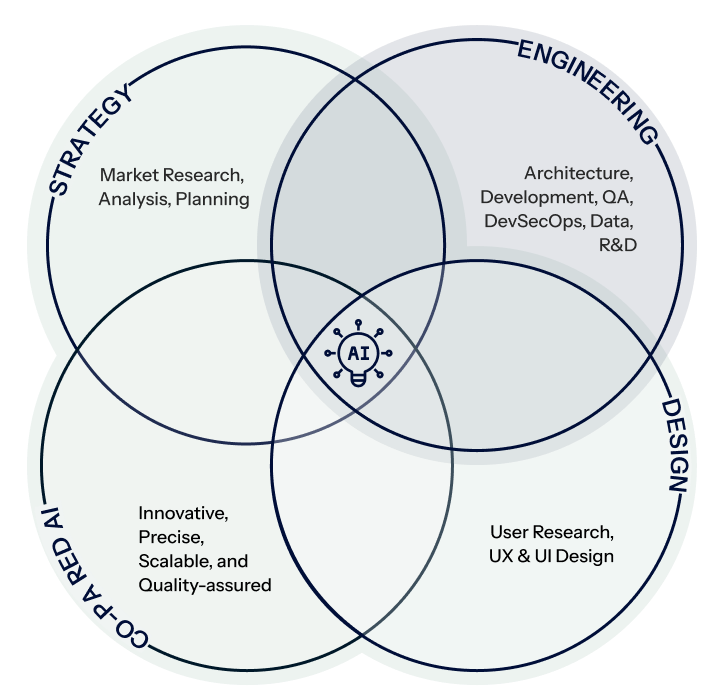

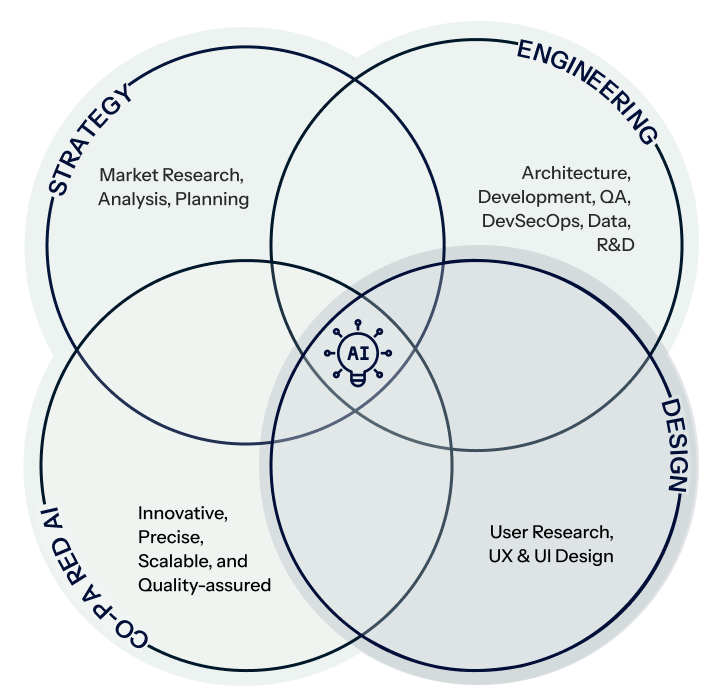

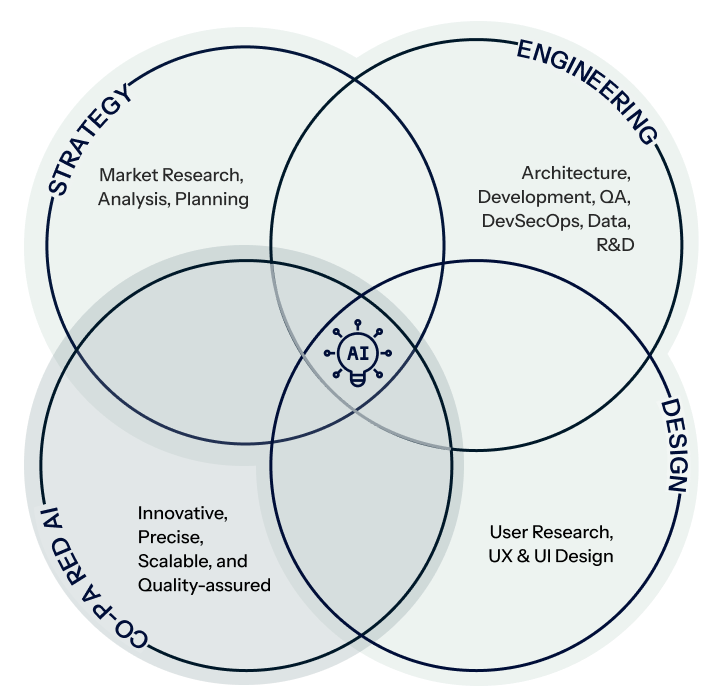

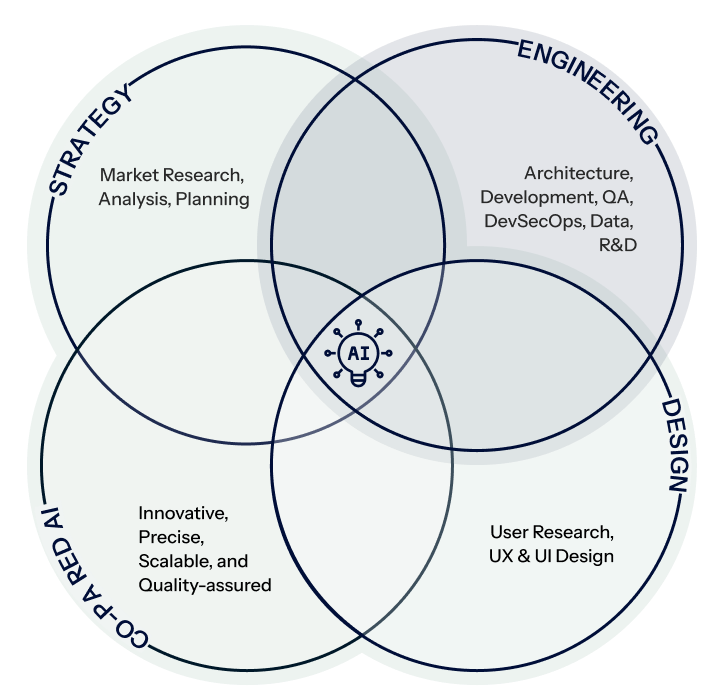

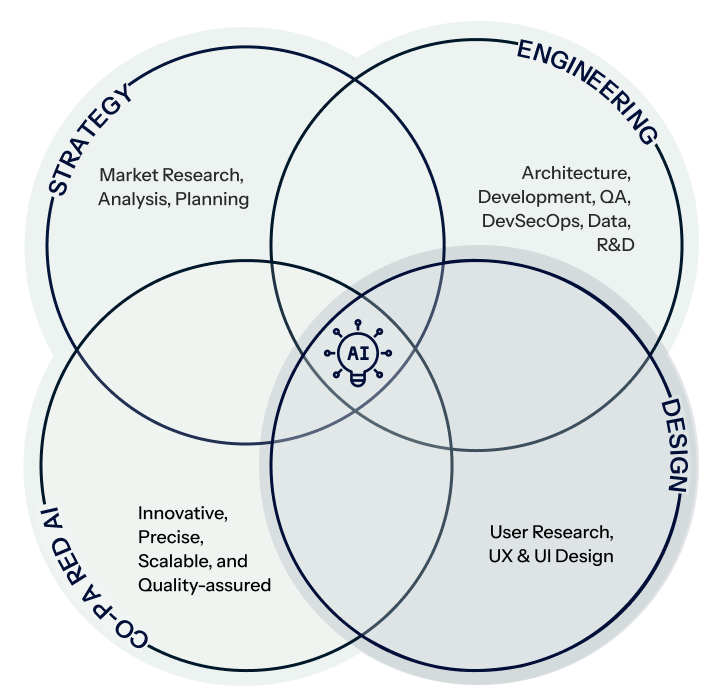

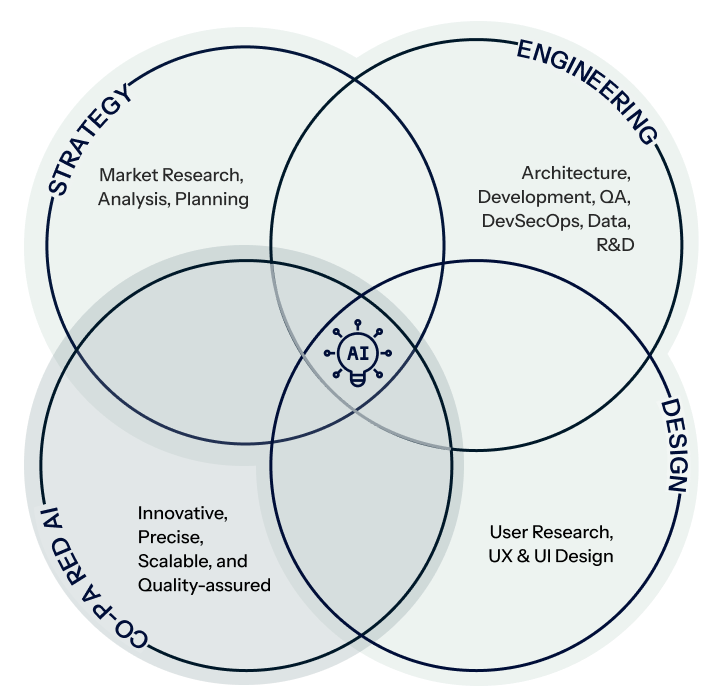

Who Are We?

Creativity, Efficiency, & Advanced AI

Strategy

Engineering

Design

Co-paired AI

Contact Us

Tell us about your custom software project

Let our team be your team

Get a technical conversation about your project, not a slide deck. Whether you need AI integration, a software engineering team, or a data platform, we’ll tell you honestly if we’re the right fit.