Real-Time Data Streaming

From Batch to Real-Time.

Without Breaking Production.

- ISO 27001 Certified

- ISO 22301 Certified

- DORA Aligned

- GDPR Compliant

- Start in 7–10 days

- From €5–6k/mo

HST Solutions builds real-time streaming systems using Apache Kafka, Confluent, Kinesis, and Flink for organisations across Ireland, the UK, and Europe, delivering event-driven architectures with zero latency impact. Proven in insurance and financial services. ISO 27001 certified.

Why teams bring us in

- Batch processing can't keep up

- Real-time is harder than expected

- Kafka expertise is rare

- POCs don't reach production

You don’t need another Kafka tutorial. You need streaming pipelines running in production, handling real load.

Who brings in a Managed Streaming Engineer

Insurtechs and fintechs needing real-time partner data

Companies building event-driven microservices

Organisations with real-time analytics requirements

Teams who tried Kafka and got stuck

Enterprises modernising from batch to streaming

If that sounds familiar, we've solved it before.

What is Real-Time Data Streaming?

Real-time data streaming is the continuous processing of data as it’s generated, rather than collecting it in batches for periodic processing. Streaming platforms like Apache Kafka enable organisations to capture events (transactions, clicks, sensor readings) and process them in milliseconds rather than hours.

Event-driven architecture uses streaming as the backbone for communication between services, enabling loose coupling, scalability, and real-time responsiveness. Modern streaming stacks combine Kafka for ingestion with Spark Streaming, Flink, or Kafka Streams for processing.

HST provides senior streaming engineers who build production-grade streaming pipelines — not POCs that demo well but fail under load. We’ve built systems processing millions of events daily with zero latency impact on production APIs.

Technology Stack

We don't push a specific stack. We work with what you have and recommend based on your actual requirements.

WHAT YOU GET

Senior Streaming Engineer

- Apache Kafka

- Spark Streaming

- Kafka Streams

- Flink

- Event-driven architecture

- AWS Kinesis

- Azure Event Hubs

Project Manager (included)

- Scope

- Comms

- Weekly status

- Risk tracking

- Stakeholder management

Architecture Reviews (included)

- 2h/week design reviews

- Event schema design

- Performance optimisation

DevOps assist (included)

- Kafka cluster management

- Monitoring

- CI/CD for streaming applications

SLA & Compliance

- Weekly Demos

- 48-hour remediation on issues we touch

- ISO 27001 & 22301

- DORA Aligned

- GDPR

- Full IP Assignment

One monthly price. One embedded seat. A full bench behind it.

What we build

Kafka Implementation

- Kafka cluster setup and configuration (on-premise, cloud, managed)

- Topic design and partitioning strategy

- Schema Registry implementation (Avro, Protobuf, JSON Schema)

- Kafka Connect for source/sink integration

- Kafka Streams applications

Stream Processing

- Apache Spark Streaming pipelines

- Apache Flink real-time processing

- Kafka Streams stateful processing

- Complex event processing (CEP)

- Real-time aggregations and windowing

Event-Driven Architecture

- Event schema design and evolution

- Microservices event backbone

- Event sourcing and CQRS patterns

- Saga orchestration

- Dead letter queue handling

Streaming Analytics

- Real-time dashboards

- Fraud detection pipelines

- Anomaly detection

- Live operational monitoring

- Stream-to-data-lake integration

Case Study

Companjon — Real-Time Insurance Partner Streams

Challenge

Companjon integrates with multiple insurance partners, each sending transaction data via APIs. The business needed real-time visibility into partner activity for operations and compliance, but couldn't impact the performance of the production APIs that partners depend on.

What We Built

- Kafka mirroring architecture capturing 100% of API traffic

- Zero latency impact on production systems: traffic is mirrored asynchronously

- Databricks bronze/silver/gold medallion architecture for processing

- Automated schema detection for varying partner formats

- Near real-time dashboards for business intelligence

The Outcome

- 100% of API traffic captured with zero performance impact

- Near real-time visibility (seconds, not hours) into partner activity

- Audit trail for regulatory compliance

- Foundation for real-time fraud detection and anomaly alerting

- New partners onboarded in hours, not weeks

Streaming Patterns We Implement

- Pattern 1: CDC to Data Lake

- Pattern 2: Event Backbone for Microservices

- Pattern 3: Real-Time Analytics

Stream events through a processing layer (Spark, Flink) to real-time dashboards. Typical uses are fraud detection, operational monitoring, and live KPIs. Replace “yesterday’s data” with “right now.”
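As a rough illustration of the windowed aggregation behind a live KPI, the sketch below counts events per partner in fixed 60-second (tumbling) windows. It is plain Python rather than Spark or Flink code; the function name, the `(timestamp, key)` event shape, and the partner names are assumptions made for the example.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds):
    """Count events per (window_start, key) using fixed, non-overlapping windows.

    `events` is an iterable of (timestamp_in_seconds, key) pairs (hypothetical shape).
    """
    counts = defaultdict(int)
    for timestamp, key in events:
        # Align the event to the start of the window it falls into.
        window_start = int(timestamp // window_seconds) * window_seconds
        counts[(window_start, key)] += 1
    return dict(counts)


# Hypothetical partner events: (seconds since start, partner id)
events = [
    (0, "partner_a"),
    (12, "partner_a"),
    (59, "partner_b"),
    (61, "partner_a"),
    (119, "partner_b"),
]
counts = tumbling_window_counts(events, window_seconds=60)
```

A stream processor adds what this sketch leaves out: state that survives restarts, handling of late or out-of-order events, and emitting results as each window closes.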

- Pattern 4: API Traffic Mirroring

Capture API traffic without impacting production performance. Async mirroring to Kafka enables analytics, testing, and compliance without touching your production path.
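One way to keep mirroring off the production path is a bounded in-memory buffer drained by a background thread. The sketch below shows that idea only. `TrafficMirror`, `handle_request`, and the `sink` callable (standing in for a Kafka producer's send call) are hypothetical names, and dropping records when the buffer is full is an assumed trade-off, not a description of any specific deployment.

```python
import queue
import threading


class TrafficMirror:
    """Hands request/response records to a background thread without blocking callers."""

    def __init__(self, sink, max_buffer=1000):
        self._buffer = queue.Queue(maxsize=max_buffer)
        self._sink = sink  # assumption: any callable, e.g. a wrapper around a producer
        self.dropped = 0
        self._worker = threading.Thread(target=self._drain, daemon=True)
        self._worker.start()

    def mirror(self, record):
        """Called on the request path; returns immediately even if the buffer is full."""
        try:
            self._buffer.put_nowait(record)
        except queue.Full:
            # Shed mirror traffic rather than slow the API; count it so it can be alerted on.
            self.dropped += 1

    def _drain(self):
        while True:
            record = self._buffer.get()
            if record is None:  # sentinel pushed by close()
                break
            self._sink(record)

    def close(self):
        """Flush remaining records and stop the worker."""
        self._buffer.put(None)
        self._worker.join()


def handle_request(payload, mirror):
    """Hypothetical API handler: build the response first, mirror afterwards."""
    response = {"status": "ok", "echo": payload}
    mirror.mirror({"request": payload, "response": response})
    return response


captured = []
mirror = TrafficMirror(sink=captured.append)
handle_request({"policy_id": 1}, mirror)
mirror.close()
```

If every record must be captured, the buffer size and the drop counter become things to monitor closely, since this sketch favours API latency over completeness when the sink falls behind.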

- Pattern 5: Stream Processing + Batch

Lambda or Kappa architecture combining real-time streams with batch processing. Best of both worlds: speed for recent data, completeness for historical.
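In a Lambda-style design, a query is typically answered by combining a batch view with a speed view. The sketch below is a minimal illustration, assuming the batch view covers everything up to a cutoff and the speed view covers only events after that cutoff. The function name, view names, and numbers are hypothetical.

```python
def merge_views(batch_view, speed_view):
    """Combine per-key totals from a batch view and a speed view.

    Assumes the two views cover disjoint time ranges, so totals can simply be added.
    """
    merged = dict(batch_view)
    for key, value in speed_view.items():
        merged[key] = merged.get(key, 0) + value
    return merged


# Hypothetical per-partner transaction counts
batch_view = {"partner_a": 1000, "partner_b": 250}  # complete up to the last batch run
speed_view = {"partner_a": 7, "partner_c": 3}       # events since the last batch run
serving_view = merge_views(batch_view, speed_view)
```

A Kappa-style design drops the batch layer and rebuilds views by replaying the stream, so there is no merge step of this kind.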

Why companies choose HST over alternatives

| | Big 4 / Consultancies | Kafka Managed Services | Solo Contractors | HST |
|---|---|---|---|---|
| Streaming expertise | | | | |
| Full solution | | | | |
| Support structure | | | | |
| Speed | | | | |
| Accountability | | | | |
| Pricing | | | | |

How We Work

Embed

Design

Build

Optimise

Pricing

Precision Pod

€5–6k/month

One engineer

- 1 senior streaming engineer, embedded in your team

- PM, architecture, and DevOps included

- Best for: focused streaming work, single use case

Pair Pod

€10–11k/month

Two engineers

- 2 engineers working together

- PM, architecture, and DevOps included

- Best for: complex event-driven architecture, multiple streams

Mini-Team

€15–16k/month

Three engineers

- 3 engineers with mixed specialities

- PM, architecture, and DevOps included

- Best for: full streaming platform builds, high-scale systems

All plans include: weekly demos, architecture reviews, SLAs, ISO 27001 certification, and full IP assignment.

Proof that Reduces Risk

Frequently asked questions

What's the difference between Kafka and traditional message queues?

Traditional message queues (RabbitMQ, ActiveMQ) are designed for point-to-point messaging: a message is consumed and gone. Kafka is a distributed log: messages persist, multiple consumers can read independently, and you can replay history. Kafka excels at high-throughput event streaming; traditional queues are better for task distribution. Most modern data architectures use Kafka for the event backbone.
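The difference can be sketched with two toy in-memory classes: a queue that removes a message when it is consumed, and a log that keeps every record and tracks a separate read offset per consumer. These are illustrations of the two models only, not RabbitMQ or Kafka APIs; `TaskQueue`, `EventLog`, and the consumer names are hypothetical.

```python
from collections import deque


class TaskQueue:
    """Queue model: a consumed message is removed and cannot be read again."""

    def __init__(self):
        self._messages = deque()

    def publish(self, message):
        self._messages.append(message)

    def consume(self):
        return self._messages.popleft() if self._messages else None


class EventLog:
    """Log model: records persist and each consumer has its own offset."""

    def __init__(self):
        self._records = []
        self._offsets = {}

    def publish(self, record):
        self._records.append(record)

    def poll(self, consumer):
        offset = self._offsets.get(consumer, 0)
        if offset >= len(self._records):
            return None
        self._offsets[consumer] = offset + 1
        return self._records[offset]

    def seek(self, consumer, offset):
        """Move a consumer's offset, e.g. back to 0 to replay history."""
        self._offsets[consumer] = offset


task_queue = TaskQueue()
task_queue.publish("claim-1")
first_read = task_queue.consume()    # the message is handed out once
second_read = task_queue.consume()   # nothing left to read

event_log = EventLog()
event_log.publish("claim-1")
analytics_read = event_log.poll("analytics")
audit_read = event_log.poll("audit")           # independent consumer sees the same record
event_log.seek("analytics", 0)
replayed_read = event_log.poll("analytics")    # replay from the start
```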

Should we use managed Kafka (MSK, Confluent Cloud) or self-hosted?

Managed services reduce operational burden: no cluster management, automatic scaling, built-in monitoring. Self-hosted gives more control and can be cheaper at scale. For most organisations, we recommend managed services unless you have specific security or customisation requirements. The engineering time saved is worth the premium.

How do we handle exactly-once processing?

Exactly-once semantics require careful design: idempotent producers, transactional writes read by read-committed consumers, and application-level deduplication. Kafka supports exactly-once within transactions, but end-to-end exactly-once requires your application logic to handle it. We design for at-least-once delivery with idempotent processing, which is more reliable in practice than trying to guarantee exactly-once.
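A minimal sketch of idempotent processing under at-least-once delivery: each event carries a unique ID, and the handler skips any ID it has already applied. The `IdempotentLedger` class, the `event_id`, `account`, and `amount` fields, and the in-memory set are assumptions for illustration. A real system would persist the seen IDs together with the state they protect.

```python
class IdempotentLedger:
    """Applies each event at most once, even if it is delivered more than once."""

    def __init__(self):
        self.balances = {}
        self._seen_event_ids = set()  # assumption: in-memory only, for illustration

    def apply(self, event):
        """Return True if the event changed state, False if it was a duplicate."""
        if event["event_id"] in self._seen_event_ids:
            return False
        account = event["account"]
        self.balances[account] = self.balances.get(account, 0) + event["amount"]
        self._seen_event_ids.add(event["event_id"])
        return True


ledger = IdempotentLedger()
deliveries = [
    {"event_id": "e1", "account": "acc-1", "amount": 50},
    {"event_id": "e2", "account": "acc-1", "amount": 25},
    {"event_id": "e1", "account": "acc-1", "amount": 50},  # redelivered after a retry
]
results = [ledger.apply(event) for event in deliveries]
```

The redelivered event is ignored, so the balance reflects each event once regardless of how many times the broker delivers it.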

Can you migrate us from batch to streaming incrementally?

Absolutely. We never recommend big-bang migrations. Typical approach: start with one high-value use case (e.g., one partner feed), prove it works, expand. You can run batch and streaming in parallel during transition. Most batch processes can be replaced incrementally over 3–6 months.

What about Kafka operations and monitoring?

We set up comprehensive monitoring from day one: Prometheus/Grafana or Datadog for metrics, alerting for lag and failures, and runbooks for common issues. If you want ongoing operational support, we can provide that. If you want to bring operations in-house, we document everything and train your team.
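Consumer lag, the metric most lag alerts are built on, is the gap between the latest offset written to a partition and the offset the consumer group has committed. The sketch below computes it from two plain dictionaries. The function names, offsets, and threshold are hypothetical, and treating a missing committed offset as 0 is an assumption made for the example.

```python
def consumer_lag(end_offsets, committed_offsets):
    """Per-partition lag: log end offset minus committed offset.

    Assumption: a partition with no committed offset is treated as committed at 0.
    """
    return {
        partition: end - committed_offsets.get(partition, 0)
        for partition, end in end_offsets.items()
    }


def partitions_over_threshold(lag_by_partition, threshold):
    """Return the partitions whose lag exceeds the alert threshold, in sorted order."""
    return sorted(p for p, lag in lag_by_partition.items() if lag > threshold)


# Hypothetical offsets for a three-partition topic
end_offsets = {0: 1500, 1: 1480, 2: 900}
committed_offsets = {0: 1495, 1: 1200, 2: 900}

lag = consumer_lag(end_offsets, committed_offsets)
alerts = partitions_over_threshold(lag, threshold=100)
```

In practice the offsets would come from the cluster or a metrics exporter, and alerts usually look at lag sustained over time rather than a single reading.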

How fast can you start?

7–10 business days from signed agreement to engineer embedded in your team.

Give us 20 minutes. We'll show you what real-time actually looks like.

Find The Perfect Solutions For Your Project

Managed Team

Your product, our dedicated team. From concept to conception, we handle it all.

Staff Augmentation

Need extra hands? Our experts seamlessly join your team, providing the skills you need, when you need them.

Fixed Cost

One Team, One Dream

Build Trust with Every Interaction

Improve Everything

Own It

Obsessed: Over Results

Proven Excellence

Partners in Precision

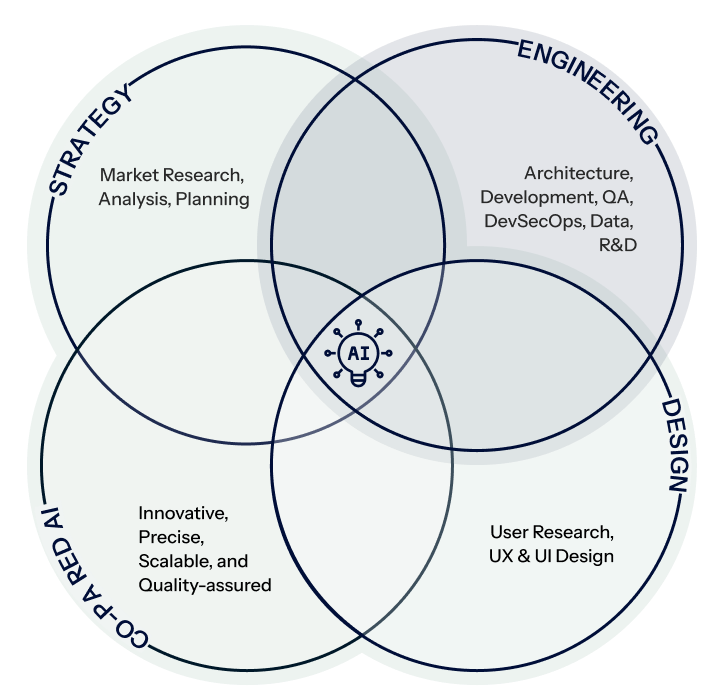

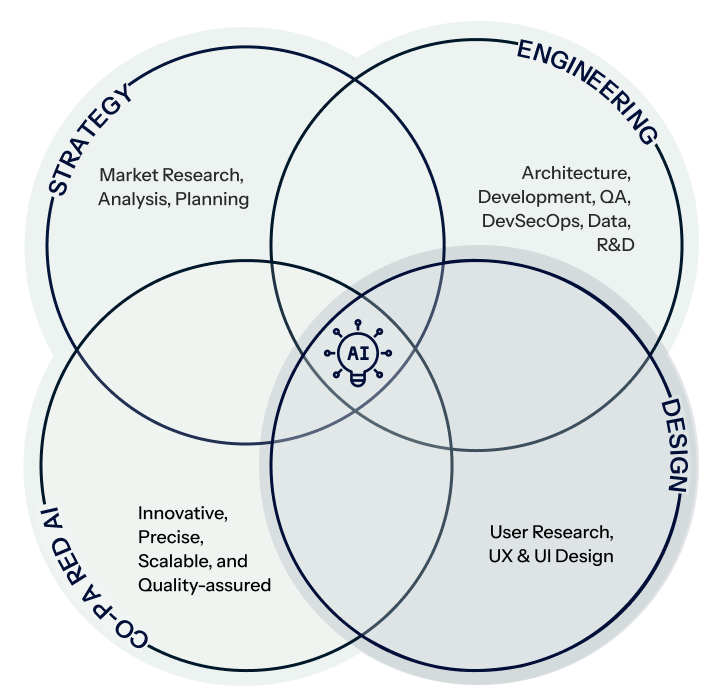

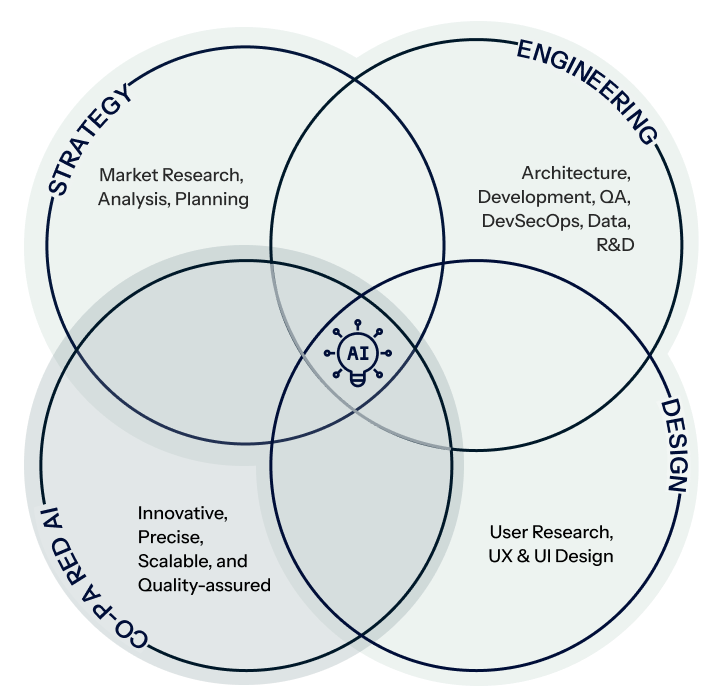

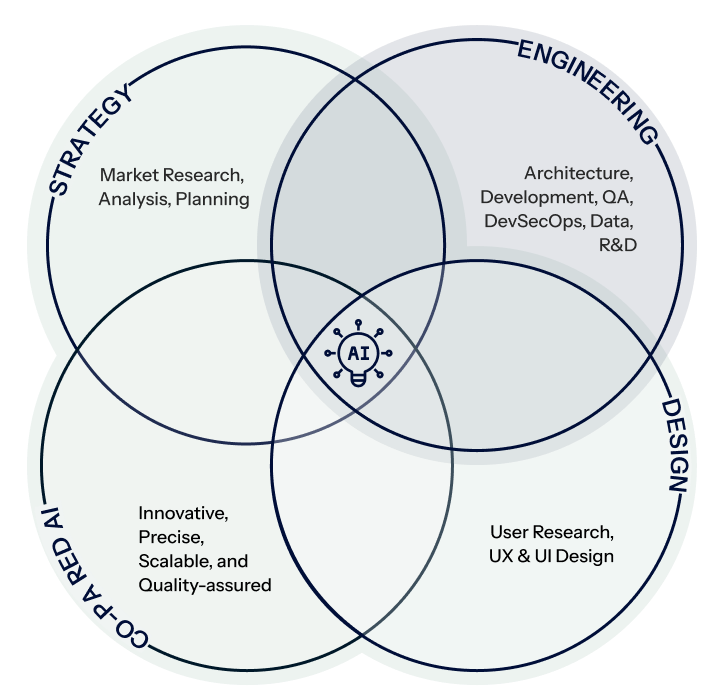

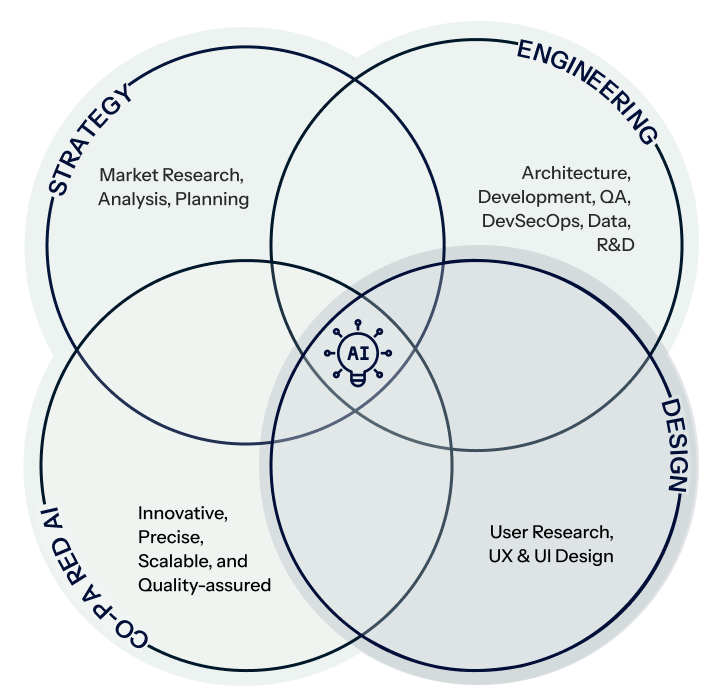

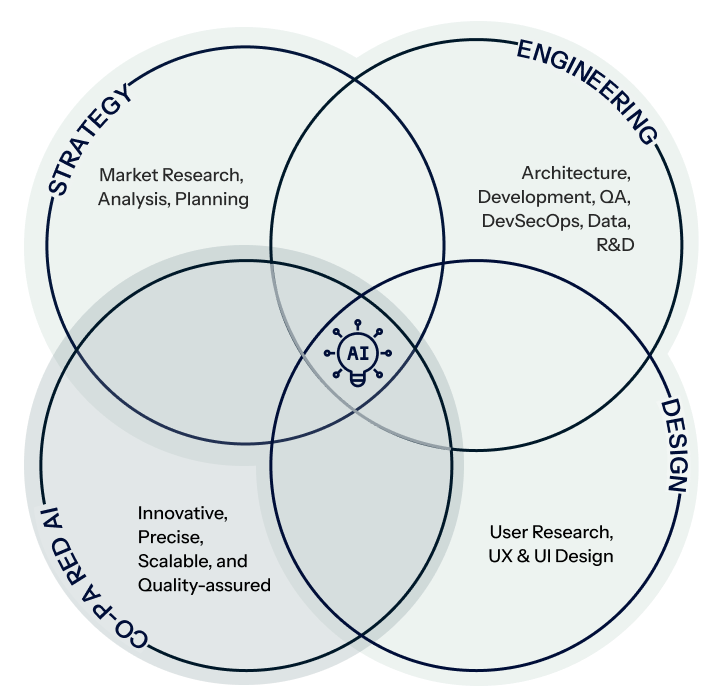

Who Are We?

Creativity, Efficiency, & Advanced AI

Strategy

Engineering

Design

Co-paired AI


Contact Us

Tell us about your custom software project

Let our team be your team

Get a technical conversation about your project — not a slide deck. Whether you need AI integration, a software engineering team, or a data platform, we’ll tell you honestly if we’re the right fit.